Cull Groups Online

Facebook was once a place to catch up with friends, join groups, or see cute baby animals. Today, nearly all the “fun content” pages on every social media platform are AI-generated, pushing “slop”. Building followers and rank with fake content, then selling the account to malignant actors, is an industry with real consequences, from fraud to elections. Fight back by focusing your online attention on real people producing valuable content.

Why we do it:

Debate rages on whether generative AI is our salvation or our destruction. Few land in the middle, where the truth usually resides. What is clearly bad is this: it is being heavily leveraged to manipulate social media and even news framing. If Russia’s efforts in 2016 put a thumb on our electoral scale, we now face a firehose to the face.

To understand how online actors manipulate public perception in elections, we strongly recommend Maria Ressa’s How to Stand Up to a Dictator. In short, bad actors can spread information much more quickly than those bound to the truth. A recent change to X revealed high-profile right-wing accounts as based in other nations. AI didn’t invent this problem, but it accelerates it. In the past year AI “slop” has exploded. These are the stories you share with “probably not real, but I kinda want it to be!” or “I had no idea that’s what a baby peacock looked like!” (because it doesn’t). It’s getting subtler, too. I recently fell for bird content that a bird expert friend said “felt a little off” but had no clear tells. My warning should have been how perfectly the bird’s outrageous behaviors were caught from just the right camera angle, every time. It is sometimes that subtle now, but when I finally searched “is (account) AI” I got quick confirmation.

These feel harmless or even fun, but at best they fuel our disconnect from reality, and at worst they build rank for accounts that are then sold on the dark web to fraudsters and political operatives alike. We fight back by siloing that content: isolate it into an AI fantasy world of its own where fake accounts consume fake content while we shun it. If we do that, it’ll proselytize to itself next election cycle, feeding a more and more unrealistic view that’s easier to filter. (This is not a theory: AI fed its own output develops a mad-cow-like dysfunction.)

How to do it:

The simplest approach is to leave or unfollow any group sharing AI stories. If you identify a few AI posts in a group, you’re likely missing others, which means you can’t trust any of its content. If someone you follow shares an AI post, block the original poster. If it happens regularly, unfollow or silence the person sharing slop. It’s impossible to vet every post, so clean up your wall to reduce the slop you have to wade through.

For a more aggressive approach, go through your groups checking these elements:

- age of group (the more recent the riskier)

- are there rules/questions new members answer?

- is there an AI policy?

- are there named/interactive admins you can identify? (This is one reason Shasta put her real face and name on this site. Trust requires trust.)

- is the content mostly real or mostly fake?

- is the group cashing in on a name brand that isn’t theirs? Look for signs the group is a “fan group” or otherwise not official. For example, there are dozens of “David Attenborough” groups that aren’t his.

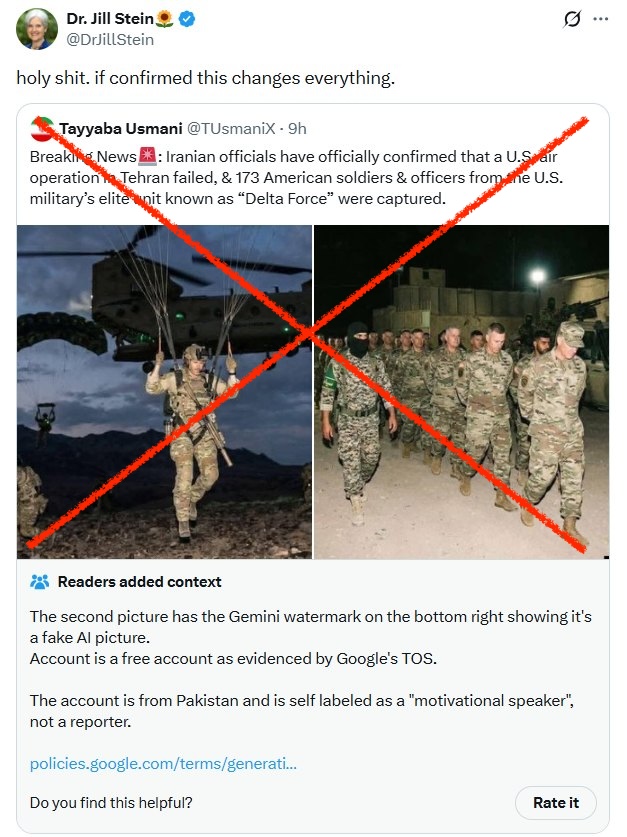

- are the posts even plausible? For example, the AI fake shared by Jill Stein in the image above is entirely implausible. If Iran had captured hundreds of U.S. service members, Iran would be bragging, and it would be international news.

For the most restrictive approach, refuse to follow/join any group you can’t positively trace to real humans. Be particularly suspicious of groups that are recent and grew quickly. It takes time to build real accounts, unless ten thousand of your closest bot friends are boosting you.

By routinely suppressing the distribution of AI content, we devalue it and discourage this tactic.

We’re building a list of real minority voices you can follow with confidence, if you need to replace bad options with diverse ones: